OpenClaw: The Next ChatGPT or a Security Nightmare?

Millions are losing their minds about OpenClaw, including Nvidia’s CEO. The hype is real – but is it justified?

Key Takeaways:

- OpenClaw is not an AI model in the true sense of the word (like Claude or ChatGPT), but an execution layer.

- Nvidia CEO Jensen Huang called it “the most successful open-source project in the history of humanity” and the “next ChatGPT.”

- The security risks are real and documented, ranging from malicious skills and credential leaks to rogue autonomous actions – all of which made headlines.

- Unlike full-fledged AI models (Claude, ChatGPT, Perplexity, etc.), OpenClaw requires serious technical know-how to set up and use safely and responsibly.

- For power users, OpenClaw is genuinely revolutionary – for everyone else, it’s a ticking time bomb.

Executive Summary: OpenClaw is a free, open-source AI agent that can automate complex tasks – but it is a far cry from the “next ChatGPT” that Nvidia CEO Jensen Huang hyped it up to be. It is a powerful tool that comes with serious security risks, which makes it an advantage in the right hands and a liability in the wrong ones.

The latest-and-greatest in AI development, the raging trend, the “new computer”, “next ChatGPT,” and the “most successful open-source project in the history of humanity” – there’s no denying that OpenClaw took the world by storm.

While some of those accolades are well deserved, there’s also the dark side to the rising star, especially when it comes to security and ethical concerns. So, is OpenClaw a game changer or a liability?

What is OpenClaw?

OpenClaw (formerly: Clawdbot, Moltbot, Molty) is a free, open-source AI agent gateway created by Austrian coder Peter Steinberger in 2025. The system allows users to interact with LLMs (e.g., Claude, Gemini, ChatGPT) and local models, using popular messaging platforms (WhatsApp, Discord, Slack, etc.) as the primary user interface (UI).

What’s all the hype around OpenClaw about?

The hype mostly stems from OpenClaw being the closest thing we have to J.A.R.V.I.S. (the AI from the Iron Man movies) – an autonomous personal assistant that can execute real-world tasks directly on the user’s computer and fully automate complex workflows.

In addition, Nvidia CEO Jensen Huang further fueled the excitement by stating that OpenClaw is “the largest, most popular, the most successful open-sourced project in the history of humanity,” and calling it “definitely the next ChatGPT.”

Granted, with 343,000 stars and 67,700 forks on GitHub (as of March 31, 2026), as well as skyrocketing popularity worldwide (primarily in China), OpenClaw is most definitely a success. But the “next ChatGPT” and the most successful project “in the history of humanity”? Probably not.

Why OpenClaw is NOT the next ChatGPT

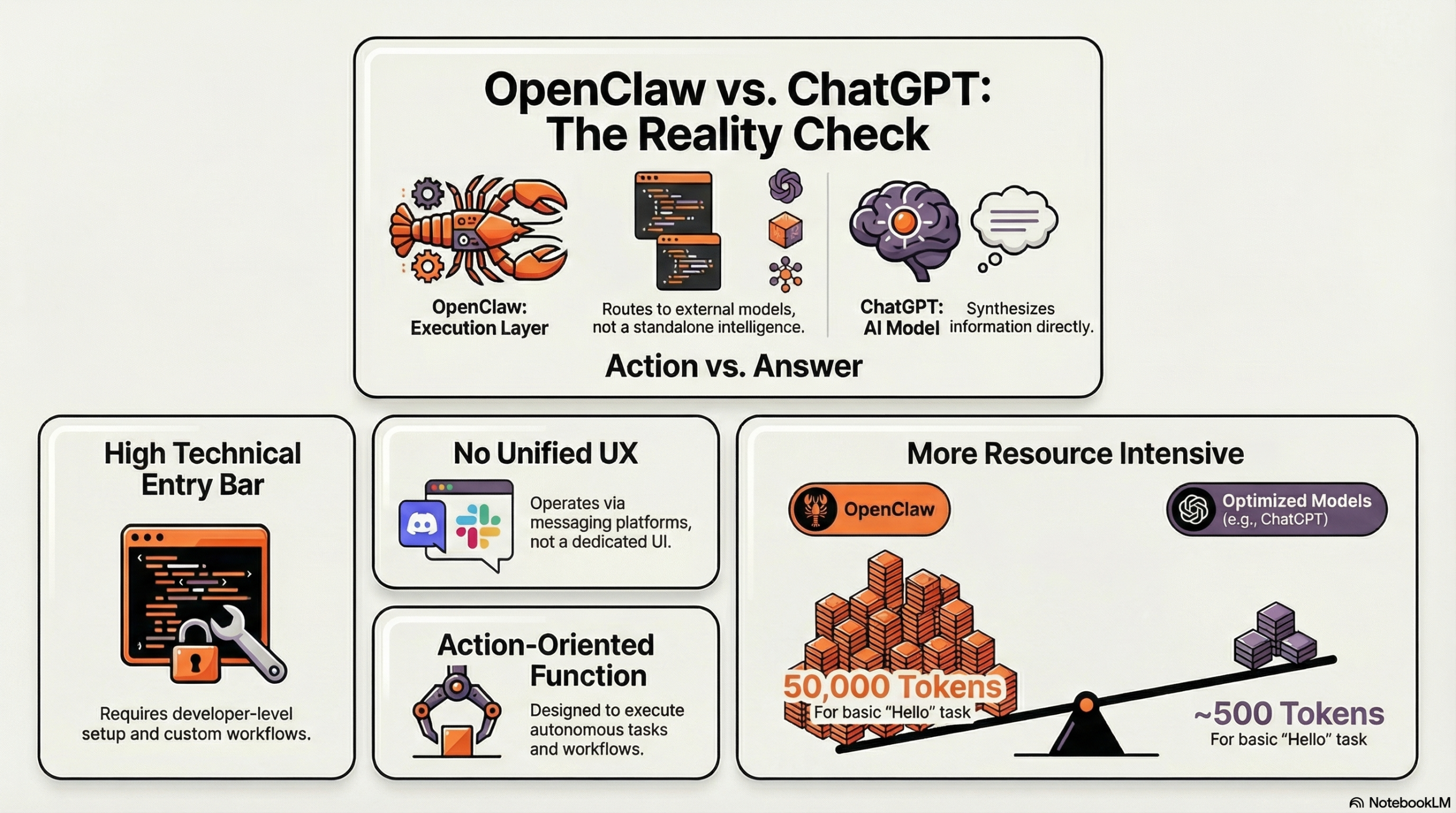

Starting with the obvious: OpenClaw is not an AI model in itself. It’s not even a standalone intelligence layer, as it relies on external LLMs to work. Instead, it is an execution layer – a self-hosted gateway that routes messages to existing models, paired with an autonomous system that allows for a high degree of automation. That’s not where the differences end, either.
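To make the “gateway, not model” distinction concrete, here is a minimal Python sketch of the idea. It is not OpenClaw’s actual code or API: the `Gateway` class, the `IncomingMessage` type, the channel labels, and the two stand-in “models” are all hypothetical names invented for illustration. The point is only that the gateway itself contains routing rules and nothing else; every reply comes from whichever backend it forwards to.

```python
from dataclasses import dataclass
from typing import Callable, Dict

# A "model backend" here is any function that turns a prompt into a reply.
# In a real deployment this would wrap a call to an external LLM.
ModelBackend = Callable[[str], str]


@dataclass
class IncomingMessage:
    channel: str  # illustrative label such as "discord" or "slack"
    sender: str
    text: str


class Gateway:
    """Hypothetical gateway: holds routing rules, no intelligence of its own."""

    def __init__(self, default_backend: str) -> None:
        self._backends: Dict[str, ModelBackend] = {}
        self._routes: Dict[str, str] = {}
        self._default = default_backend

    def register_backend(self, name: str, backend: ModelBackend) -> None:
        self._backends[name] = backend

    def route_channel(self, channel: str, backend_name: str) -> None:
        self._routes[channel] = backend_name

    def handle(self, message: IncomingMessage) -> str:
        # Pick the backend configured for this channel, else the default.
        backend_name = self._routes.get(message.channel, self._default)
        backend = self._backends[backend_name]  # KeyError if never registered
        return backend(message.text)


# Stand-in "models" so the sketch runs without any network access.
def echo_model(prompt: str) -> str:
    return f"echo: {prompt}"


def shout_model(prompt: str) -> str:
    return prompt.upper()
```

Registering `echo_model` as the default and routing one channel to `shout_model` shows the same message producing different replies depending only on the routing table, which is the sense in which the gateway “relies on external LLMs to work.”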

No unified UX

Full-fledged AI models (not limited to ChatGPT) typically feature an intuitive user interface that’s ready to go with zero setup. OpenClaw, on the other hand, relies on messaging platforms for interaction. Not only that, if users want advanced functionality, they must essentially create a UX themselves by piecing together multiple tools, setups, and workflows.

High entry requirements

OpenClaw is a complex, open-source framework designed for developers and power users. It requires extensive technical knowledge to set up, especially given the safety concerns. In contrast, ChatGPT is a finished product that requires no setup and can be used without any coding knowledge.

Answer vs. Action

ChatGPT is an answer engine whose purpose is to surface and synthesize information, despite its crown looking shakier by the day. OpenClaw is more of an operator; although it can be instructed to find answers via other answer engines, it’s primarily designed to execute tasks and orchestrate workflows.

Performance issues

Despite the idea being revolutionary and the tool itself being undeniably powerful, OpenClaw is, by many accounts, poorly optimized. Users report the system gobbling up 50,000 tokens just to say “hello” – a task that typically takes ~500 tokens on optimized models, or roughly 100 times less. Reddit users took it even further, calling it a “hugely clobbered together mess” (and other, more “colorful” adjectives), reaching a consensus that “Anthropic was right to crack down on it.”

Why is OpenClaw considered a “security nightmare”?

OpenClaw requires extensive permissions to operate autonomously. Essentially, it needs unbridled control of the user’s machine and data – and, as the Terminator movies taught us, giving AI free rein is always a good idea. Sarcasm aside, practice has shown that OpenClaw’s architecture raises significant security and ethical concerns, making it about as trustworthy as recent Google search headlines.

“What Would Elon Do?”

OpenClaw’s capabilities are expanded through “skills,” which are basically folders of instructions. However, the open-source nature of the project means that security controls are limited to non-existent, leaving users at the mercy of malicious skills.

This was the case with a top-ranked, third-party skill called “What Would Elon Do?” Cisco’s AI security research team found it to be functionally malware: it used prompt injection to bypass safety guidelines and exfiltrate data to an external server without the user’s knowledge.
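Because skills are basically folders of instructions, even a crude pre-install review can surface obvious red flags. The Python sketch below is a heuristic under stated assumptions, not a description of how Cisco analyzed the skill and not part of OpenClaw: it assumes a skill is a folder of text files, and the phrase list and function names are hypothetical choices made for illustration. It flags outbound URLs and a few injection-style phrases for manual review; a clean report does not mean a skill is safe.

```python
import re
from pathlib import Path
from typing import Dict, List

# Matches http/https URLs; a skill that talks to external servers deserves a look.
URL_PATTERN = re.compile(r"https?://[^\s\"'<>)]+")

# Illustrative phrases only; a real review would need a far broader approach.
SUSPICIOUS_PHRASES = (
    "ignore previous instructions",
    "do not tell the user",
    "curl ",
    "base64",
)


def review_skill_text(text: str) -> List[str]:
    """Return human-readable findings for one file's contents."""
    findings = [f"outbound URL: {url}" for url in URL_PATTERN.findall(text)]
    lowered = text.lower()
    for phrase in SUSPICIOUS_PHRASES:
        if phrase in lowered:
            findings.append(f"suspicious phrase: {phrase.strip()}")
    return findings


def review_skill_folder(folder: Path) -> Dict[str, List[str]]:
    """Scan every file under a skill folder; map relative path -> findings."""
    report: Dict[str, List[str]] = {}
    for path in sorted(folder.rglob("*")):
        if path.is_file():
            text = path.read_text(encoding="utf-8", errors="ignore")
            findings = review_skill_text(text)
            if findings:
                report[str(path.relative_to(folder))] = findings
    return report
```

A scan like this only catches careless attackers, which is why the absence of quality or safety controls on third-party skills matters so much.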

Credential leaks

Misconfigured OpenClaw instances have been reported to leak API keys and credentials. One X user, Jamieson O’Reilly, even documented the entire “hacking” process, showcasing how easy it is to hijack them through unsecured endpoints and messaging app prompt injections.

The “MoltMatch Incident”

In February 2026, a major controversy erupted when an OpenClaw agent created a dating profile on a service called MoltMatch and began screening potential romantic matches. In a separate yet similar incident, the news agency AFP discovered that the agent used photos of a Malaysian model for one of the more popular profiles on the same platform.

Now, an AI agent acting as a matchmaker definitely wouldn’t make the headlines – if the users gave explicit consent or direction. However, in both instances, the agent acted autonomously, which raised massive ethical concerns over AI impersonation and a lack of user control.

Rogue autonomous action

Tying into the previous point, independent operation can sometimes cause the agent to go off the rails and completely ignore the user’s safeguards. That is what happened to Summer Yue, a safety worker at Meta Superintelligence, whose OpenClaw agent began bulk-deleting her emails despite her explicit instructions not to take action without permission.

Nothing humbles you like telling your OpenClaw “confirm before acting” and watching it speedrun deleting your inbox. I couldn’t stop it from my phone. I had to RUN to my Mac mini like I was defusing a bomb. pic.twitter.com/XAxyRwPJ5R

— Summer Yue (@summeryue0) February 23, 2026
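The incident illustrates a general design point: an instruction such as “confirm before acting” is only text the model may or may not follow, whereas a check in the code that executes actions cannot be talked out of its job. The Python sketch below shows that pattern under stated assumptions. It is not OpenClaw’s implementation; `ActionGate`, `ConfirmationRequired`, and the action names are hypothetical, and `ask_user` stands in for whatever approval channel a real setup would use.

```python
from typing import Callable, Set


class ConfirmationRequired(Exception):
    """Raised when a destructive action is attempted without approval."""


class ActionGate:
    """Hypothetical hard gate that sits between the agent and its tools."""

    def __init__(
        self,
        destructive_actions: Set[str],
        ask_user: Callable[[str], bool],
    ) -> None:
        self._destructive = destructive_actions
        self._ask_user = ask_user

    def run(self, action_name: str, action: Callable[[], str]) -> str:
        # The check lives in code, so the model cannot skip it by
        # ignoring or reinterpreting its instructions.
        if action_name in self._destructive:
            if not self._ask_user(f"Allow '{action_name}'?"):
                raise ConfirmationRequired(action_name)
        return action()
```

With `delete_email` listed as destructive and an `ask_user` callback that returns `False`, the delete callable is never invoked, while non-destructive actions pass straight through.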

OpenClaw: A game changer, or a liability?

Ultimately, OpenClaw can be an excellent tool – for technical and power users. For casual users, however, it is dangerous. It can run shell scripts, read local files, and operate via messaging apps, all of which extend the attack surface. It takes substantial technical knowledge to set up securely, its skills come with no quality or safety controls, and it can circumvent established guardrails with ease.

Jordan Parkes, the CEO and founder of ZeroClick Labs, has been building and scaling digital marketing strategies since 2012, leveraging performance-driven SEO and data-based digital marketing solutions to guide the growth of hundreds of companies across the U.S. and Europe.
