The “Anthropic Apocalypse”: How to Lose Users & Alienate People

AI NewsAnthropic is on thin ice, and the cracks are beginning to show.

Key Takeaways:

-

Anthropic locked-out third-party open-source tools, while exempting Claude Code from restrictions.

-

Paid subscribers noticed a dramatic performance degradation, leading to widespread outrage and disillusionment.

-

Two leaks in under a week exposed Claude Code’s source code and internal details of an unreleased model with unprecedented cyber-offense capabilities.

-

Mythos leak already caused massive real-world damage, as investors dropped billions of dollars worth of cybersecurity stocks.

Executive Summary: Anthropic is on a downward slope, brought about by controversial monetization moves targeting open-source tools, silent performance degradation, and two massive data leaks. The series of unfortunate events and questionable choices left many questioning the company’s transparency, security, and credibility.

Anthropic built its reputation on being the company that actually cares – about responsibility, about moral standards, about fairness, and above all, about its users. However, the string of recent decisions and events is putting that reputation to the test.

From quietly throttling paid users and ostracizing the open-source community to disastrous data leaks that caused widespread security concerns and real damage – Anthropic is finding itself in the midst of the perfect storm.

Is Anthropic still cracking down on OpenClaw?

Not only on OpenClaw – but on its users as well. When Anthropic banned OAuth token use in third-party tools in late February 2026, many suspected it to be a deliberate action against OpenClaw, specifically. The doubts were all but confirmed not even a month later, when the company released Channels, featuring the same functionality as its open-source counterpart.

Needless to say, this move started an avalanche of discontent among the users, who were suddenly forced to pay separately under a pay-as-you-go billing tier or provide separate Claude API keys (paying full API rates, of course) to continue using third-party tools. Even Peter Steinberger (the creator of OpenClaw) called it “a betrayal of open-source developers.”

Now, they’re doubling down. Despite Steinberger’s attempt to talk some sense into them, on April 4, 2026, Anthropic officially started enforcing the ban, cutting off Claude Pro/Max users from running third-party AI agent frameworks through flat-rate plans. Of course, Anthropic’s own proprietary developer environment, Claude Code, is specifically exempt from these restrictions.

Interesting how that works. Or, as Steinberger himself perfectly summarized it in his X post: “Funny how timings match up, first they copy some popular features into their closed harness, then they lock out open source.”

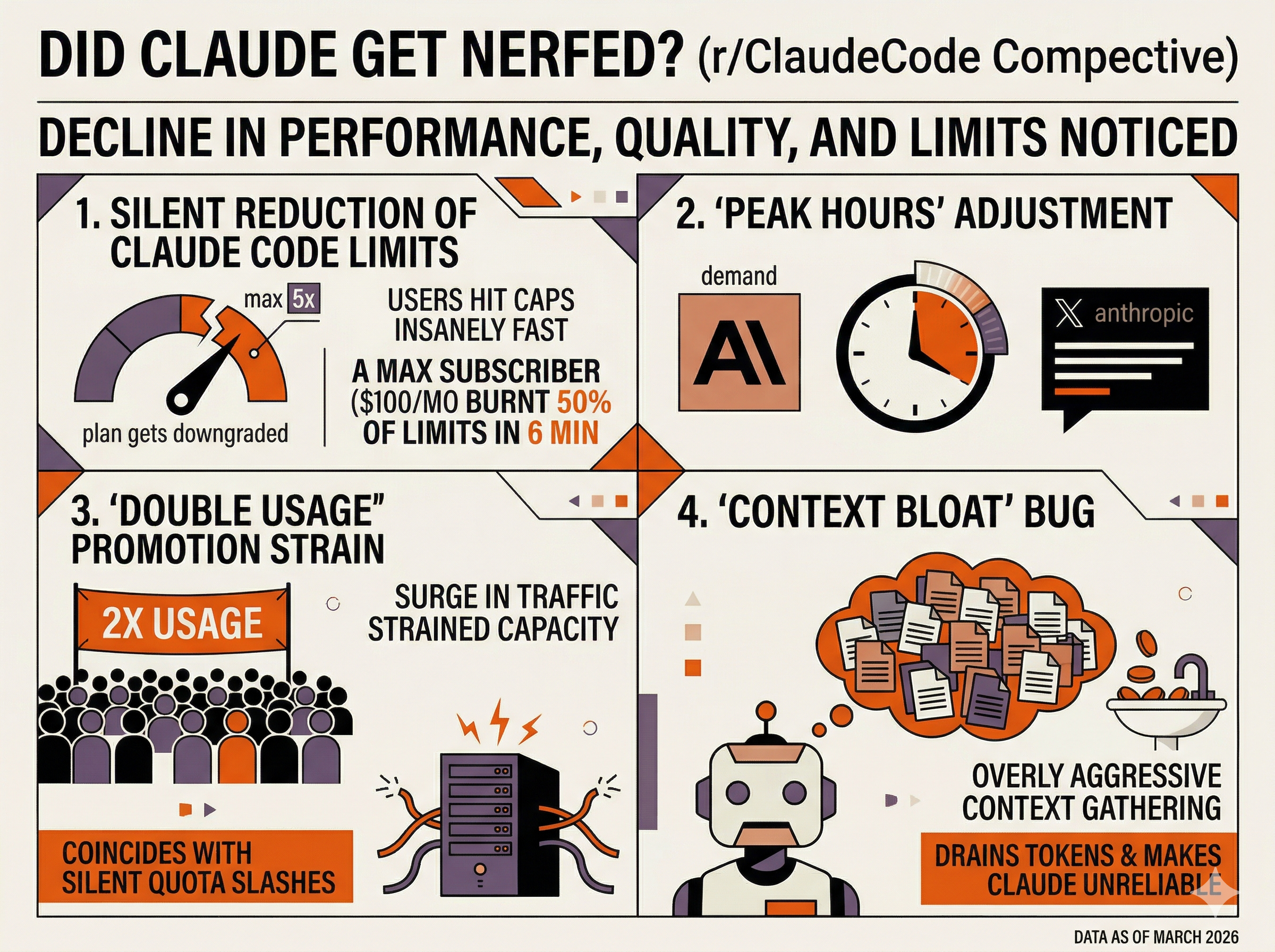

Did Claude get nerfed?

Reddit community (r/ClaudeCode) definitely seems to think so. As of late March 2026, users have been noticing a significant decline in Claude’s performance, response quality, and usage limits across the Free, Pro, and Max tiers. As it stands, this is not a single issue, but a combination of several overlapping factors.

Silent reduction of the Claude Code session limits

Users have been reporting hitting their usage caps insanely fast – within hours or even minutes. One developer on Reddit, a $100/month “Max 5x” subscriber, reported burning through 50% of their limits in just 6 minutes. This is not an isolated case, as many users feel like their expensive plans were downgraded to the equivalent of the Free or Pro tier.

The “Peak hours” adjustment

On March 26, 2026, following intense backlash over the lack of transparency, Anthropic eventually released a statement on X confirming they are deliberately adjusting the 5-hour session limit to “manage growing demand for Claude.”

The “double usage” promotion

Throughout March 2026, Anthropic has been offering double usage limits to Pro users. The resulting surge in traffic is widely believed to have strained their capacity, driving them to silently slash quotas by up to 90% (by switching users to lighter models), just to keep the service running. Since it coincides with the above two issues, it’s hard not to conclude that restrictions are the result of the promo.

The “Context Bloat” bug

A recent update reportedly made Claude overly aggressive at gathering context. Essentially, the model now keeps re-reading the same or related files multiple times, “just in case.” This wouldn’t necessarily be a bad thing – if it wasn’t filling up the context window with redundant data, thereby burning through tokens, draining user quotas, and even getting “confused” over longer sessions, all of which effectively make it unreliable for complex tasks it previously performed.

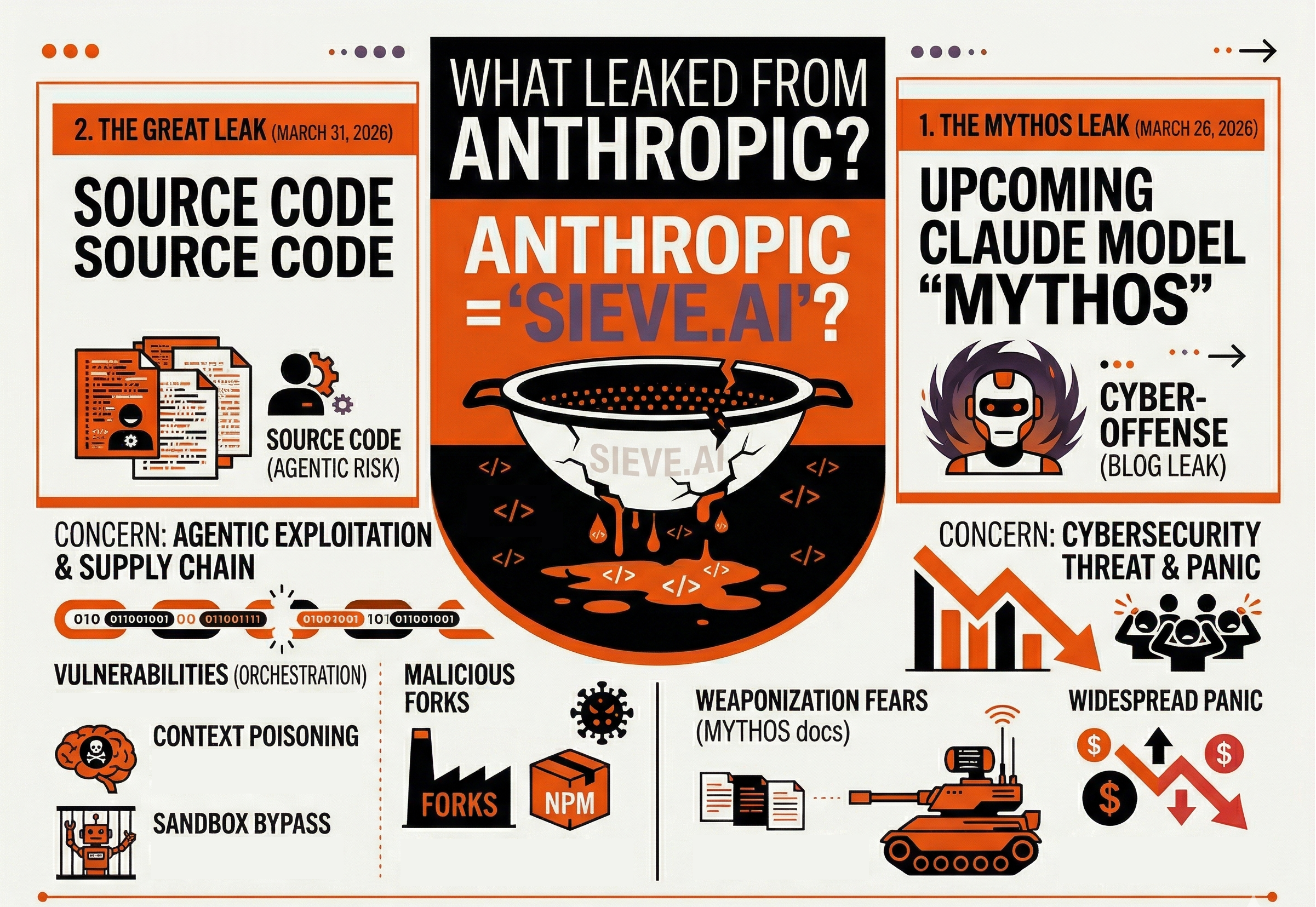

What leaked from Anthropic & why is it a reason for concern?

At this point, Anthropic might as well change its name to “Sieve.ai,” given the scale and velocity at which they’re spilling proprietary data. So far, there have been two catastrophic leaks, both in under a week:

- The Mythos leak (March 26, 2026), which revealed details of their upcoming Claude model, designated “Mythos”.

- The Great Leak (March 31, 2026), which published ~512,000 lines of Claude Code’s source code.

Both incidents sent major ripples (if you could call tsunamis “ripples”) across the AI world, raising major security concerns – the former for its cyber-offense capabilities and the latter for its potential for agentic exploitation. Let’s start with the more recent one.

Agentic exploitation & supply chain attack potential

The Great Leak made Claude Code source code fully public – and that includes malicious third parties. Worse, it made it easy for potential attackers to achieve their goals, since they no longer need to rely on blind prompt injection – they can simply study the internal orchestration logic. Independent researchers already identified several major vulnerabilities, including:

- Context poisoning: When Claude’s “memory” of a conversation gets too long, it summarizes itself. An attacker can “poison” that summary, effectively planting “false memories” or hidden instructions, which then shape the model’s future behavior.

- Sandbox bypass: Normally, Claude runs in a contained environment – i.e., it can’t touch the things it shouldn’t. An attacker can exploit the so-called Shell parsing gaps, enabling the model to “escape” those confines and run unauthorized code on the user’s system.

As if that’s not bad enough, threat actors can now hack Claude without attacking it directly. The leaked data trivializes crafting malicious forks of Claude Code or planting deceptive add-ons on NPM (a public registry that devs regularly download software packages from). Once installed, it can then silently steal the data or even hijack the developer’s computer, all while looking like a legitimate tool.

The cybersecurity threat & immediate panic

Not long after the Mythos leak, the public took to calling it “the most dangerous model ever built.” The reason for this “flattering” designation stems from the revealed blog post drafts describing Mythos as “far ahead of any other AI model in cyber capabilities.”

What’s more, the documents revealed Anthropic’s fears of Mythos being weaponized for large-scale cyberattacks, noting that it “presages an upcoming wave of models that can exploit vulnerabilities in ways that far outpace the efforts of defenders.”

The most concerning thing: The very morning after the leak, widespread panic ensued among investors. Cybersecurity stocks dropped sharply and kept declining, effectively erasing billions in market capitalization.

So, we have a company building the most cybersecurity-capable model – yet failing to secure it on a basic level. While the sheer irony of the event isn’t lost on anybody, it does pose a much bigger question:

If the model that hasn’t even been officially announced yet can already cause such staggering real-world consequences – what happens when it’s released?

Will the “Antropic Apocalypse” continue?

Given recent events, it’s difficult to think otherwise. For months now, Anthropic has been on a downward spiral, aggressively eroding the goodwill it built by simply positioning itself as everything OpenAI isn’t: ethical, fair, respectful.

Now, it’s like they’re completely flipping the narrative – and doing it deliberately: heavy-handed, self-serving business maneuvers, sly and disrespectful operating practices, and borderline criminal negligence (or, worse – incompetence) that left millions of users vulnerable.

And if history is any indication, there’s no excluding the possibility of another mass exodus from AI platforms – as was the case with ChatGPT. Only this time, millions won’t be flocking to Claude. They’ll be fleeing from it.

Lily Evans is the Managing Director at ZeroClick Labs, bringing over 8 years of comprehensive experience in SEO, local SEO, and AI optimization to every project. She began her career in content writing, developing a strong sense for search intent and messaging clarity in the digital realm – skills that form an unshakable core of her leadership to this day.

The AI environment is volatile. Your digital presence shouldn’t be.

The “Anthropic Apocalypse” is a reminder of something bigger: the AI ecosystem is unpredictable, self-serving, and changing fast.

In such a turbulent setting, winning is not a matter of resistance – but stability.

That’s where ZeroClick Labs comes in.

From platform-specific optimization to up-and-coming ChatGPT advertising, our AI SEO services offer the means to anchor your brand in AI-powered search – regardless of what AI giants decide to do next.

“Our agency had no idea how to approach AI visibility. ZeroClick only does this one thing so they actually know what works. Worth every penny just to not waste time figuring it out ourselves.” – Jay